Every security framework, every certification course, every vendor white paper tells you what you should do. Implement least privilege. Segment your network. Patch within 30 days. Enforce MFA everywhere. Use zero trust architecture.

All of this is good advice. In theory.

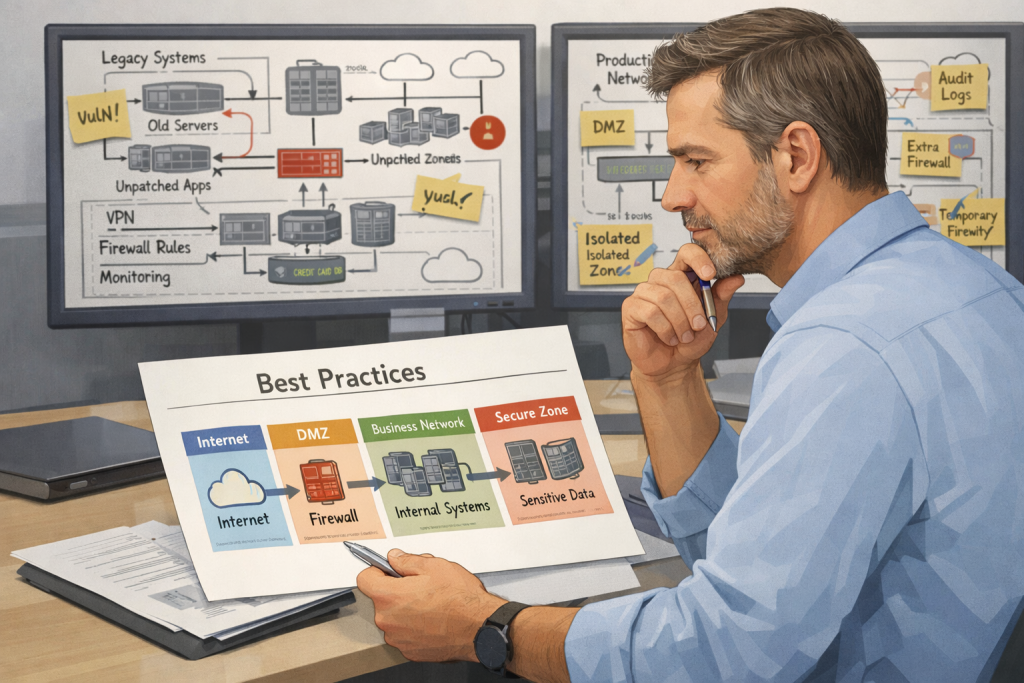

In practice, you’re working in an environment with legacy systems that can’t be easily changed, technical debt that accumulated over years, resource constraints that limit what’s actually achievable, and business requirements that sometimes conflict with security best practices.

So you’re left figuring out: when do I insist on the textbook approach, and when do I accept that we need a different solution that’s good enough given our constraints?

This is where judgment matters. Where experience matters. Where understanding the difference between “this is suboptimal but acceptable” and “this is actually dangerous and we can’t accept it” makes the difference between being effective and being either rigid or reckless.

The Legacy System Problem

You have a legacy application that’s critical to the business. It runs on an operating system that’s no longer supported. It can’t be upgraded because the vendor doesn’t support newer OS versions. It can’t be replaced because it would cost millions and take years.

Best practice says: don’t run unsupported operating systems. They don’t get security patches. Every vulnerability that gets discovered remains unpatched forever.

Reality says: this system is running business-critical processes and it’s not going away anytime soon.

So what do you do?

You can’t magically make the application work on a supported OS. You can’t wave a wand and get budget for a multi-million dollar replacement project. You can’t just turn it off because the business depends on it.

What you can do is implement compensating controls. Segment it so it’s not directly accessible from the internet or the general corporate network. Monitor it closely. Restrict access to only the people and systems that absolutely need it. Put additional layers of defense around it. Accept that the system itself is vulnerable, but reduce the likelihood and impact of that vulnerability being exploited.

Is this ideal? No. Is it acceptable given the constraints? Sometimes yes.

The judgment call is whether the compensating controls are sufficient to reduce the risk to an acceptable level. Sometimes they are. Sometimes they’re not, and you need to escalate and push for the replacement project even though it’s expensive and difficult.
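As one illustration of the "restrict access" control above, here is a minimal sketch of a source allowlist check. The network ranges and the function name are hypothetical, and in a real environment the enforcement would sit in a firewall or network ACL rather than in a script. The point is only that the set of systems allowed to reach the legacy host should be small, explicit, and easy to review.

```python
import ipaddress

# Hypothetical allowlist: the only sources permitted to reach the legacy system.
ALLOWED_SOURCES = [
    ipaddress.ip_network("10.20.30.0/28"),   # assumed: application servers that call it
    ipaddress.ip_network("10.20.40.15/32"),  # assumed: a single admin jump host
]


def may_reach_legacy_system(source_ip):
    """Return True only if the source address falls inside an allowed network."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in network for network in ALLOWED_SOURCES)


print(may_reach_legacy_system("10.20.30.5"))   # inside the /28
print(may_reach_legacy_system("10.20.31.5"))   # general corporate network
print(may_reach_legacy_system("203.0.113.7"))  # internet address
```

Default-deny is the design choice that matters here: anything not explicitly listed is refused.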

The Technical Debt Trap

Technical debt accumulates. Applications get built with hard-coded credentials because that was expedient at the time. Service accounts get created with overly broad permissions because figuring out the minimum necessary access was time-consuming. Integrations get implemented in ways that work but aren’t secure because the deadline was tight.

Best practice says: fix all of it. Implement proper secrets management. Enforce least privilege. Rebuild integrations properly.

Reality says: you have finite resources and fixing all the technical debt would take years of dedicated effort that you don’t have bandwidth for.

So you prioritize. What technical debt creates the most risk? What’s easiest to fix relative to the risk reduction? What can be addressed incrementally versus what requires a big-bang fix?

You might decide that hard-coded credentials in production applications are unacceptable and need to be fixed even if it’s difficult. But hard-coded credentials in rarely-used internal tools are lower priority and can wait until you have time.

You might decide that overprivileged service accounts with access to production databases get fixed first. Overprivileged accounts in development environments get fixed eventually but not immediately.

This is triage. You’re making trade-offs based on realistic assessment of risk versus effort. Not because you don’t care about the other technical debt, but because you can’t fix everything at once and you need to focus on what matters most.
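The triage described above can be sketched as a simple ranking. This is an illustration, not a formula to follow: the 1-to-5 scales, the scores, and the `DebtItem` name are all assumptions, and the scores themselves still come from judgment.

```python
from dataclasses import dataclass


@dataclass
class DebtItem:
    name: str
    risk: int    # assumed scale: 1 (low) to 5 (severe)
    effort: int  # assumed scale: 1 (quick fix) to 5 (big-bang project)


def triage(items):
    """Order items by risk reduction per unit of effort, highest first.

    Ties are broken in favor of the riskier item.
    """
    return sorted(items, key=lambda i: (i.risk / i.effort, i.risk), reverse=True)


# Hypothetical backlog using the examples from the text.
backlog = [
    DebtItem("hard-coded credentials, production app", risk=5, effort=3),
    DebtItem("hard-coded credentials, rarely-used internal tool", risk=2, effort=3),
    DebtItem("overprivileged service account, production database", risk=5, effort=2),
    DebtItem("overprivileged account, development environment", risk=2, effort=2),
]

for item in triage(backlog):
    print(f"{item.risk / item.effort:.2f}  {item.name}")
```

With these made-up scores, the production items land at the top and the internal tool at the bottom, which matches the ordering argued for above.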

The Resource Constraint Reality

Best practices assume you have adequate resources. Budget for tools. Staff to implement and maintain controls. Organizational capacity for change. Leadership buy-in and support.

Most organizations don’t have adequate resources. You have to work with what you’ve got.

Maybe you’d like to implement a full SIEM with a security operations center. But you have budget for a basic logging solution and no headcount for analysts. So you implement what you can afford, automate what can be automated, and accept that your detection capabilities are limited.

Maybe you’d like to have dedicated security engineers embedded in development teams. But you have three security people for the entire organization. So you build security champions in the dev teams, provide guidance and tools, and accept that you can’t review everything.

Maybe you’d like to implement comprehensive security awareness training with simulations and role-based content. But you have budget for an annual basic training module. So you focus on the highest-risk behaviors and supplement with targeted communications about active threats.

Maybe you’d like to enforce stronger access controls across legacy systems. But leadership doesn’t see it as a priority and won’t support the organizational change required. So you focus on the highest-risk systems where you can make the case, document the gaps in the rest, and work incrementally toward broader coverage when you can build more support.

None of this is ideal. But it’s making realistic trade-offs based on actual constraints.

The mistake would be doing nothing because you can’t do everything. Partial implementation of security controls is still better than no implementation.

The Business Requirement Conflict

Sometimes business requirements genuinely conflict with security best practices.

The business needs to share data with partners who have weaker security practices than you’d like. Best practice would be to only share with partners who meet your security standards. Business reality is that you don’t always get to choose your partners—sometimes the business relationship is critical and you have to work with what you’ve got.

The business needs to enable a workflow that requires more privileged access than you’d ideally grant. Best practice would be to redesign the workflow. Business reality is that redesigning the workflow would affect revenue-generating processes and isn’t happening.

The business needs to deploy a new feature on a tight timeline that doesn’t allow for complete security review. Best practice would be to never deploy without thorough security assessment. Business reality is that missing the market window has costs too.

In these situations, your job isn’t to just say no. It’s to understand the business requirement, assess the risk it creates, and figure out what mitigations are possible given the constraints.

Maybe you can’t redesign the partner integration, but you can limit what data is shared and monitor the integration closely. Maybe you can’t change the privileged access requirement, but you can add additional logging and alerting. Maybe you can’t delay the feature launch, but you can implement basic security controls now and plan for improvements in the next release.

You’re not accepting risk blindly. You’re making informed trade-offs with appropriate mitigations.

The “Good Enough” Threshold

How do you know when something is good enough versus when it’s unacceptably risky?

There’s no formula. It’s judgment based on understanding the specific risk, the specific environment, and the specific constraints.

Some factors that matter:

Exposure. Is this accessible from the internet, or is it internal-only? Is it in a DMZ, or is it on the general corporate network? Exposure level changes the risk calculation significantly.

Data sensitivity. Does this system handle customer PII, financial data, health information? Or is it internal operational data that’s not particularly sensitive? Risk to sensitive data raises the bar for what’s acceptable.

Likelihood of exploitation. Is this a known, actively exploited vulnerability? Or is it a theoretical weakness that would be difficult to exploit in practice? Active threats raise urgency.

Compensating controls. What other layers of defense exist? If this control is weak but there are multiple other controls that would prevent the same attack, that’s different from this being a single point of failure.

Cost and complexity of improvement. Is there a straightforward fix, or would proper remediation require major architectural changes? Sometimes “good enough” is what’s achievable, and perfect is years away.

Organizational risk tolerance. Different organizations have different appetites for risk based on industry, regulatory environment, and business model. What’s acceptable in a startup is different from what’s acceptable in a bank.

The judgment call is weighing all of these factors and deciding whether the current state is acceptable or whether it needs to be escalated and addressed despite the difficulty.

When to Insist on Best Practice

There are situations where you shouldn’t compromise.

Cryptography. Don’t accept weak encryption because it’s easier to implement. Don’t accept custom cryptography because someone thought they could do better than standard algorithms. This is an area where best practices should be followed strictly because the consequences of getting it wrong are severe and the expertise required to do it correctly is specialized.

Authentication to critical systems. MFA for administrative access to production systems, financial systems, systems containing sensitive data—this is non-negotiable. The risk of credential compromise is too high and the mitigation is well-understood and achievable.

Critical vulnerabilities in internet-facing systems. If there’s a known, actively exploited vulnerability in a system that’s directly accessible from the internet, that needs to be fixed. Not eventually—now. The risk is too high to accept even temporarily in most cases.

Compliance requirements. If something is required for regulatory compliance and there’s no waiver or alternative, you have to do it. The consequences of non-compliance are not acceptable.

Obvious security debt in new projects. If you’re building something new, build it right. Don’t accept hard-coded credentials or missing authentication or SQL injection vulnerabilities in new code. Technical debt in legacy systems is a reality you inherit. Technical debt in new systems is a choice.

The common thread is: where the risk is high, where the remediation is achievable, where there’s no legitimate reason not to do it properly—insist on best practice.

When to Accept Trade-offs

There are also situations where accepting something less than ideal is reasonable.

Legacy systems with compensating controls. If the system can’t be fixed immediately but the risk can be mitigated with other layers of defense, that’s often acceptable.

Low-risk systems with low-priority findings. Not every vulnerability needs immediate remediation. Low-severity findings in low-risk systems can be scheduled for when resources are available.

Partial implementation while full implementation is in progress. If you’re rolling out MFA but it takes time to implement everywhere, having it on the most critical systems first and expanding coverage over time is reasonable.

Business-critical processes that can’t be interrupted. If proper remediation requires downtime during a critical business period, sometimes you accept the risk short-term and schedule the work for a maintenance window.

Resource-constrained environments doing the best they can. If an organization genuinely doesn’t have the resources to implement everything properly, focusing on the highest-risk areas and accepting gaps in lower-risk areas is pragmatic.

The key is being honest about what you’re accepting and why. Documenting it. Making sure decision-makers understand the risk. And having a plan for improvement even if it’s not immediate.

The Communication Challenge

When you’re accepting something that’s not best practice, you need to communicate that clearly.

Not: “This is fine.”

But: “This is not ideal. Here’s the risk. Here’s why we can’t fix it immediately. Here’s what we’re doing to mitigate the risk in the meantime. Here’s the plan for proper remediation.”

That transparency is important. It makes sure people understand what they’re accepting. It documents your professional opinion. It shows you’re being realistic, not just rubber-stamping everything.

It also positions you as someone who understands constraints and works within them, rather than someone who just says no to everything that’s not textbook perfect.

Avoiding Rationalization

The danger in accepting trade-offs is that it can become a slippery slope. Every deviation from best practice comes with a rationale, and each rationale sounds reasonable on its own. Eventually you’re accepting things that really aren’t acceptable, and you’ve rationalized it as pragmatic.

The check against this is periodic review. Are the temporary mitigations actually temporary, or have they become permanent? Are the compensating controls still in place and effective, or have they degraded? Are the plans for eventual remediation actually moving forward, or have they been indefinitely delayed?

If “temporary” means “indefinite” and “we’ll fix it later” means “we’ll never fix it,” then you’re not making pragmatic trade-offs—you’re accepting poor security and calling it realistic.

Be honest with yourself about this. Accepting imperfection within a clear improvement plan is pragmatic. Accepting imperfection with no intention of improvement is just accepting poor security.
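The periodic review questions above can be partly automated. The sketch below assumes each accepted risk records a remediation due date and the last date its compensating controls were verified. The field names and the 90-day threshold are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AcceptedRisk:
    title: str
    remediation_due: date       # when "temporary" was supposed to end
    controls_verified_on: date  # last check that compensating controls still work


def review_flags(risk, today, max_control_age_days=90):
    """Return the reasons this accepted risk needs another look, if any."""
    flags = []
    if today > risk.remediation_due:
        flags.append("remediation overdue")
    if (today - risk.controls_verified_on).days > max_control_age_days:
        flags.append("compensating controls not recently verified")
    return flags


# Hypothetical entry: a mitigation that has quietly become permanent.
legacy = AcceptedRisk(
    title="unsupported OS on legacy application",
    remediation_due=date(2023, 12, 31),
    controls_verified_on=date(2023, 6, 1),
)
print(review_flags(legacy, today=date(2024, 3, 1)))
```

An empty list does not mean the risk is fine. It only means neither of these two mechanical checks tripped.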

Building Toward Better

Even when you’re accepting trade-offs, you should be working toward improvement.

That means documenting what’s not ideal and why. Maintaining a list of technical debt and security gaps. Having a plan—even if it’s a multi-year plan—for addressing them.

Put this in your risk register. Document each accepted risk with the reasoning, the compensating controls, and the plan for eventual remediation. This helps you prioritize—you can focus on the riskiest items first, but you can also identify the quick wins: lower-cost fixes that mostly need human time rather than budget.
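A register entry along the lines just described might look like the sketch below. The field names, the severity labels, and the `needs_budget` flag are assumptions for illustration. Most organizations would keep this in a GRC tool or a spreadsheet rather than in code.

```python
from dataclasses import dataclass, field

# Assumed severity labels, ordered from most to least serious.
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class RegisterEntry:
    risk: str
    severity: str
    reasoning: str                      # why the risk is being accepted for now
    compensating_controls: list = field(default_factory=list)
    remediation_plan: str = ""
    needs_budget: bool = True           # False: mostly human time, no new spend


def riskiest_first(register):
    """Sort entries so critical and high items come first."""
    return sorted(register, key=lambda e: SEVERITY_ORDER[e.severity])


def quick_wins(register):
    """Entries that can be fixed with staff time rather than budget."""
    return [e for e in register if not e.needs_budget]


# Hypothetical register with two entries.
register = [
    RegisterEntry(
        risk="hard-coded credentials in internal reporting tool",
        severity="medium",
        reasoning="rarely used, internal only",
        remediation_plan="move secrets to the existing vault",
        needs_budget=False,
    ),
    RegisterEntry(
        risk="legacy application on unsupported OS",
        severity="high",
        reasoning="vendor does not support newer OS versions",
        compensating_controls=["network segmentation", "restricted access", "monitoring"],
        remediation_plan="replacement project, multi-year",
    ),
]

print([e.risk for e in riskiest_first(register)])
print([e.risk for e in quick_wins(register)])
```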

And here’s an important signal to watch: the trend over time and the severity distribution. If you’re early in your security program and doing discovery, your risk register will grow—that’s expected. You’re finding historical issues that have been there all along.

But if you’re in steady-state operations and your risk register keeps growing quarter over quarter, especially with high or critical severity items, that tells you something. You’re not making pragmatic trade-offs anymore—you’re falling further behind. New risks are being introduced faster than you can remediate existing ones.

Similarly, if your risk register has 500 items but they’re mostly low severity with compensating controls, that’s a different situation than 50 items that are all high severity with inadequate mitigations.

That’s information leadership needs to see. A growing count of high-severity accepted risks becomes evidence that current resource levels aren’t adequate for maintaining reasonable security posture.
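Both signals, the trend and the severity distribution, are easy to compute from register snapshots. In the sketch below, the snapshot format (a list of severity labels per quarter) and the definition of "keeps growing" as a rise in every quarter are assumptions. The numbers are invented apart from the 500-item and 50-item comparison taken from the text.

```python
from collections import Counter

SERIOUS = {"high", "critical"}


def serious_count(severities):
    """Count the high and critical items in one register snapshot."""
    counts = Counter(severities)
    return sum(counts[level] for level in SERIOUS)


def keeps_growing(quarterly_snapshots):
    """True if the serious count rose in every quarter-over-quarter step."""
    counts = [serious_count(s) for s in quarterly_snapshots]
    return len(counts) >= 2 and all(b > a for a, b in zip(counts, counts[1:]))


# Hypothetical snapshots, oldest first; each is a list of severity labels.
snapshots = [
    ["high"] * 4 + ["low"] * 20,
    ["high"] * 6 + ["critical"] * 1 + ["low"] * 22,
    ["high"] * 9 + ["critical"] * 2 + ["low"] * 21,
]
print([serious_count(s) for s in snapshots])
print(keeps_growing(snapshots))

# Raw size says little: 500 mostly-low items versus 50 high items.
mostly_low = ["low"] * 480 + ["medium"] * 20
all_high = ["high"] * 50
print(serious_count(mostly_low), serious_count(all_high))
```

A chart of the serious count per quarter is often more persuasive to leadership than the register itself.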

Beyond tracking the risk register, your focus should be on forward movement.

That means making incremental progress. Even if you can’t fix everything, fixing the worst things makes the overall posture better.

It means building security into new projects properly so you’re not accumulating more debt. The existing debt might be a reality you inherit, but at least you’re not making it worse.

And it means advocating for the resources to do things properly. If you’re constantly accepting trade-offs because you don’t have adequate resources, that’s information leadership needs to hear. They might not fund everything you ask for, but they should understand the gap between current state and adequate security.

Practical Takeaways

Best practices are guidance, not absolute rules. They assume conditions that don’t always exist.

Legacy systems and technical debt are realities. Focus on compensating controls when immediate remediation isn’t feasible.

Resource constraints are real. Prioritize based on risk versus effort. Partial implementation beats no implementation.

Some business requirements conflict with security best practices. Your job is to mitigate risk within constraints, not just say no.

Good enough depends on exposure, data sensitivity, likelihood of exploitation, compensating controls, cost and complexity of improvement, and organizational risk tolerance.

Insist on best practice for cryptography, authentication to critical systems, critical vulnerabilities in exposed systems, compliance requirements, and new projects, where security debt is a choice rather than something you inherit.

Accept trade-offs when risk is lower, when remediation isn’t immediately feasible, or when resources are constrained—but document what you’re accepting and why.

Communicate clearly about risks being accepted and plans for improvement. Transparency matters.

Avoid the rationalization trap. Temporary should actually be temporary. Review regularly whether mitigations are still in place. Make incremental progress toward better security even when you can’t fix everything immediately.
